LM Studio Mini Head-to-Head Benchmark (2026-02-22)

Run dir: /home/slime/.openclaw/workspace-base/.run/lmstudio-mini-benchmark/20260222-151100

Settings

- Endpoint:

http://192.168.1.189:1234/v1 - Chat max_tokens:

128(raised) - Chat prompts:

5

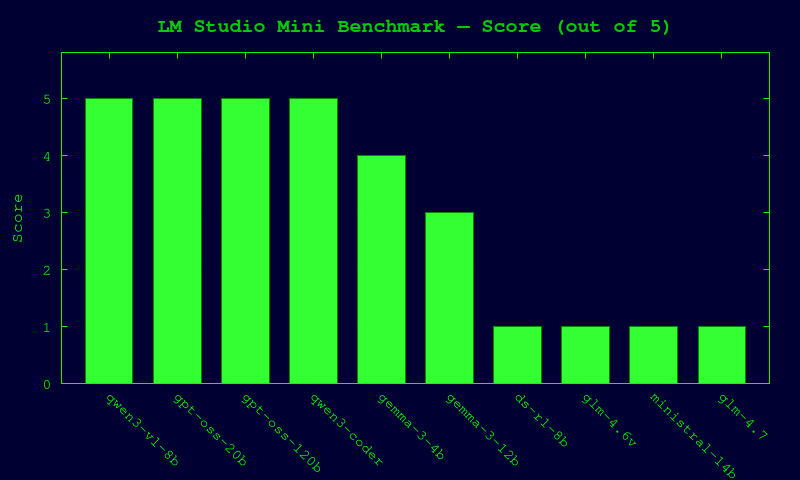

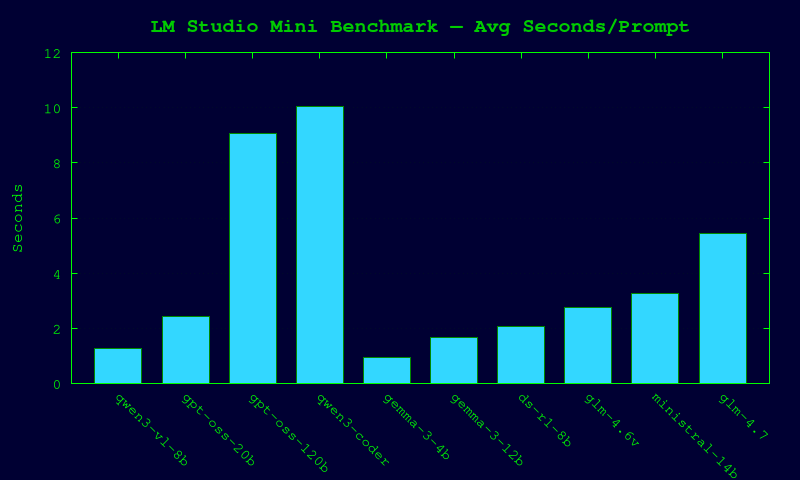

Visual Summary

Chat Model Ranking

| Rank | Model | Score | Avg sec/prompt |

|---|---|---|---|

| 1 | qwen/qwen3-vl-8b | 5/5 | 1.27 |

| 2 | openai/gpt-oss-20b | 5/5 | 2.44 |

| 3 | openai/gpt-oss-120b | 5/5 | 9.07 |

| 4 | qwen3-coder-next | 5/5 | 10.04 |

| 5 | google/gemma-3-4b | 4/5 | 0.93 |

| 6 | google/gemma-3-12b | 3/5 | 1.66 |

| 7 | deepseek/deepseek-r1-0528-qwen3-8b | 1/5 | 2.05 |

| 8 | zai-org/glm-4.6v-flash | 1/5 | 2.75 |

| 9 | mistralai/ministral-3-14b-reasoning | 1/5 | 3.28 |

| 10 | zai-org/glm-4.7-flash | 1/5 | 5.44 |

Prompt-by-Prompt (chat)

`qwen/qwen3-vl-8b` — 5/5 (avg 1.27s)

- arith: score=1, sec=5.94, resp=

133 - sort: score=1, sec=0.12, resp=

1,2,8,9 - rev: score=1, sec=0.08, resp=

desserts - logic: score=1, sec=0.07, resp=

yes - json: score=1, sec=0.14, resp=

{"sum":5,"word":"ok"}

`openai/gpt-oss-20b` — 5/5 (avg 2.44s)

- arith: score=1, sec=10.6, resp=

133 - sort: score=1, sec=0.44, resp=

1,2,8,9 - rev: score=1, sec=0.31, resp=

desserts - logic: score=1, sec=0.48, resp=

yes - json: score=1, sec=0.39, resp=

{"sum":5,"word":"ok"}

`openai/gpt-oss-120b` — 5/5 (avg 9.07s)

- arith: score=1, sec=5.96, resp=

133 - sort: score=1, sec=12.24, resp=

1,2,8,9 - rev: score=1, sec=6.17, resp=

desserts - logic: score=1, sec=7.08, resp=

Yes - json: score=1, sec=13.9, resp=

{"sum":5,"word":"ok"}

`qwen3-coder-next` — 5/5 (avg 10.04s)

- arith: score=1, sec=37.85, resp=

133 - sort: score=1, sec=3.82, resp=

1,2,8,9 - rev: score=1, sec=2.14, resp=

desserts - logic: score=1, sec=1.91, resp=

Yes - json: score=1, sec=4.47, resp=

{"sum":5,"word":"ok"}

`google/gemma-3-4b` — 4/5 (avg 0.93s)

- arith: score=1, sec=4.18, resp=

133 - sort: score=0, sec=0.16, resp=

1, 2, 8, 9 - rev: score=1, sec=0.08, resp=

desserts - logic: score=1, sec=0.09, resp=

Yes. - json: score=1, sec=0.14, resp=

{"sum":5,"word":"ok"}

`google/gemma-3-12b` — 3/5 (avg 1.66s)

- arith: score=1, sec=7.58, resp=

133 - sort: score=0, sec=0.23, resp=

1, 2, 8, 9 - rev: score=1, sec=0.11, resp=

desserts - logic: score=1, sec=0.1, resp=

Yes. - json: score=0, sec=0.28, resp=

`json {"sum":5,"word":"ok"}`

`deepseek/deepseek-r1-0528-qwen3-8b` — 1/5 (avg 2.05s)

- arith: score=0, sec=5.47, resp=

<think> First, the user said: "Return ONLY the final answer as digits: 19*7". So, I need to calculat - sort: score=0, sec=1.19, resp=

<think> First, the user said: "Return ONLY comma-separated ascending numbers: 9 1 8 2". I need to re - rev: score=0, sec=1.2, resp=

<think> Okay, the user asked me to return only a reversed string of "stressed". Let me start by unde - logic: score=1, sec=1.18, resp=

<think> First, the question is: "If all bloops are razzies and all razzies are lazzies, are all bloo - json: score=0, sec=1.21, resp=

<think> We are given a string that is supposed to be a JSON object, but it has an error in the sum c

`zai-org/glm-4.6v-flash` — 1/5 (avg 2.75s)

- arith: score=0, sec=8.22, resp=

<think>用户现在需要解决的是计算19乘以7的结果,然后只返回数字。首先计算19*7,19乘以7的话,可以分解成10*7 + 9*7 = 70 + 63 = 133。所以最终答案是133。</th - sort: score=0, sec=1.38, resp=

<think>The user wants me to return only a comma-separated list of ascending numbers. Input:9 1` - rev: score=0, sec=1.37, resp=

<think>The user wants me to return the reversed string of "stressed". 1. Analyze the input: The - logic: score=1, sec=1.38, resp=

<think>用户现在需要解决的问题是判断“如果所有bloops都是razzies,且所有razzies都是lazzies,那么所有bloops都是lazzies吗?”首先分析逻辑关系。根据传递性,如 - json: score=0, sec=1.4, resp=

<think>The user wants me to return only the valid minified JSON:{"sum":2+3,"word":"ok"}. 1. **Ana

`mistralai/ministral-3-14b-reasoning` — 1/5 (avg 3.28s)

- arith: score=0, sec=9.51, resp=

[THINK]Okay, I need to calculate 19 multiplied by 7. First, I recall that multiplication is repeated - sort: score=0, sec=1.72, resp=

[THINK]Okay, I need to return only comma-separated ascending numbers from the given input "9 1 8 2". - rev: score=0, sec=1.73, resp=

[THINK]Okay, I need to return only the reversed string "stressed." Let me think about how to approac - logic: score=1, sec=1.72, resp=

[THINK]Okay, I need to answer this question with only one word: yes or no. The question is about log - json: score=0, sec=1.71, resp=

[THINK]Okay, I need to return only valid minified JSON. The example given is{"sum":2+3,"word":"ok"`

`zai-org/glm-4.7-flash` — 1/5 (avg 5.44s)

- arith: score=0, sec=16.44, resp=

The user wants me to calculate the product of 19 and 7. The user has specified a strict constraint: - sort: score=0, sec=2.58, resp=

1. Analyze the Request: * Input: "9 1 8 2" (a list of numbers). * Constraint 1: "Return ONLY com - rev: score=0, sec=2.45, resp=

1. Analyze the Request: The user wants a specific string ("stressed") reversed, and they explici - logic: score=1, sec=2.9, resp=

1. Analyze the Request: * Premise 1: All bloops are razzies. * Premise 2: All razzies are lazzie - json: score=0, sec=2.84, resp=

1. Analyze the Request: * Input:Return ONLY valid minified JSON: {"sum":2+3,"word":"ok"}* Co

Embedding Models

text-embedding-nomic-embed-text-v1.5: ok, 1.08s, dim=768

Artifacts

- Results JSON:

/home/slime/.openclaw/workspace-base/.run/lmstudio-mini-benchmark/20260222-151100/results.json